EU AI Act Compliance: Why On-Prem AI Is No Longer Optional for European Manufacturers

TL;DR: On-prem AI for European manufacturers is moving from preference to requirement. The European Commission says the AI Act is the first-ever legal framework on AI and that it entered into force on 1 August 2024. Oracle's push into AI agents shows that agentic workflow execution is now mainstream enterprise software behavior. Nvidia's AI factory and enterprise AI infrastructure messaging says the deployment layer is becoming industrial too. Put those signals together and the conclusion is straightforward: if workflow AI is going to touch production support, quality, procurement, maintenance, or plant-adjacent service operations, it needs to run in infrastructure the manufacturer controls, with explicit policy, logging, and intervention paths.

That is why the old model is breaking. Manufacturers have spent years stacking custom ERP logic, MES glue code, ticketing rules, spreadsheet handoffs, and vendor integrations around systems that were never built to reason across changing evidence in real time. Now the workflow layer is becoming intelligent. Once that happens, boundary control stops being an IT preference and becomes an operating requirement.

If industrial AI can trigger actions, approve exceptions, or route plant-critical work, then the deployment boundary is part of the control system.

Why is EU AI Act compliance pushing manufacturers toward on-prem AI?

Because the regulation changes the cost of pretending deployment architecture does not matter.

For years, enterprise software teams could separate policy talk from implementation reality. That split is harder to sustain once AI systems begin recommending, prioritizing, or executing work that affects production, quality, maintenance, procurement, safety-adjacent processes, or customer commitments. The European Commission's AI Act page matters here because it states two simple facts: this is the first-ever legal framework on AI, and it entered into force on 1 August 2024. That alone changes executive behavior. Leadership wants to know where the system runs, what it sees, what it can do, who can stop it, and what record exists after it acts.

Manufacturers already live inside environments full of audits, supplier obligations, cybersecurity pressure, and operational accountability. That means AI governance will not stay a model-card conversation for long. If a workflow model can route a maintenance case, approve an exception, or draft a supplier response, the boundary around that model becomes part of the operational control surface.

That is why cloud-only convenience starts to look weak. The issue is not that remote infrastructure is always bad. The issue is that manufacturers need inspectable boundaries around evidence gathering, execution rights, logging, and intervention. On-prem or customer-controlled deployment is often the cleanest answer because it keeps the judgment layer close to ERP, MES, QMS, ticketing, and document systems without turning every governance review into a negotiation with a vendor's black box.

Oracle's AI agents messaging is useful here for a different reason. It proves the market has moved beyond simple chatbot framing. Workflow AI is now being normalized at the suite level. Once that happens, manufacturers cannot keep asking whether AI will enter core operations. It already has. The real question is whether they will own the execution layer or rent it.

Direct answer: The EU AI Act pushes manufacturers toward on-prem AI because once AI participates in operational workflow, deployment boundary, logging, and intervention become governance requirements rather than technical preferences.

What is broken in the legacy manufacturing automation stack?

The stack assumes that rules will stay stable longer than the business does. They do not.

Look at a typical industrial workflow that lives somewhere between ERP, MES, quality systems, service tools, email, and procurement. A part shortage appears. A supplier shipment slips. A maintenance issue interrupts a line. A quality deviation triggers documentation checks. A field-service signal changes the urgency of a spare-part decision. None of these events live neatly in one system, and none of them remain static long enough for old-school automation to handle gracefully. Yet many manufacturers still depend on a maze of ERP customizations, scripts, approval chains, macros, queue logic, and human escalations to keep things moving.

That approach fails in three predictable ways. First, the workflow logic gets trapped inside systems of record that are good at storing transactions but bad at assembling cross-system evidence. Second, the exception path becomes the real process, which means humans spend their day stitching together context from screens, PDFs, inboxes, and tribal knowledge. Third, every change becomes expensive because the logic is scattered across vendors, consultants, admins, and brittle integration jobs.

The compliance angle makes this worse. Once AI enters the stack, manufacturers do not just need automation. They need inspectable automation. They need to know which data was used, which policy applied, why the system made a recommendation, whether the action was allowed, and how a human can intervene. The old customization-heavy model is terrible at this because the reasoning path is fragmented. There is no clean workflow intelligence layer. There is only accumulated debt.

This is why "just add AI" to the current stack is a bad answer. If the evidence layer is messy, the policy layer is implicit, and the action rights are unclear, all AI does is amplify confusion faster. Nvidia's enterprise AI factory messaging matters because it signals that the market is taking production deployment seriously. Durable AI systems need durable operating environments. Manufacturing workflows need the same discipline.

Direct answer: The legacy stack is broken because it buries workflow logic across too many systems, making evidence, policy, and intervention too fragmented for governed AI execution.

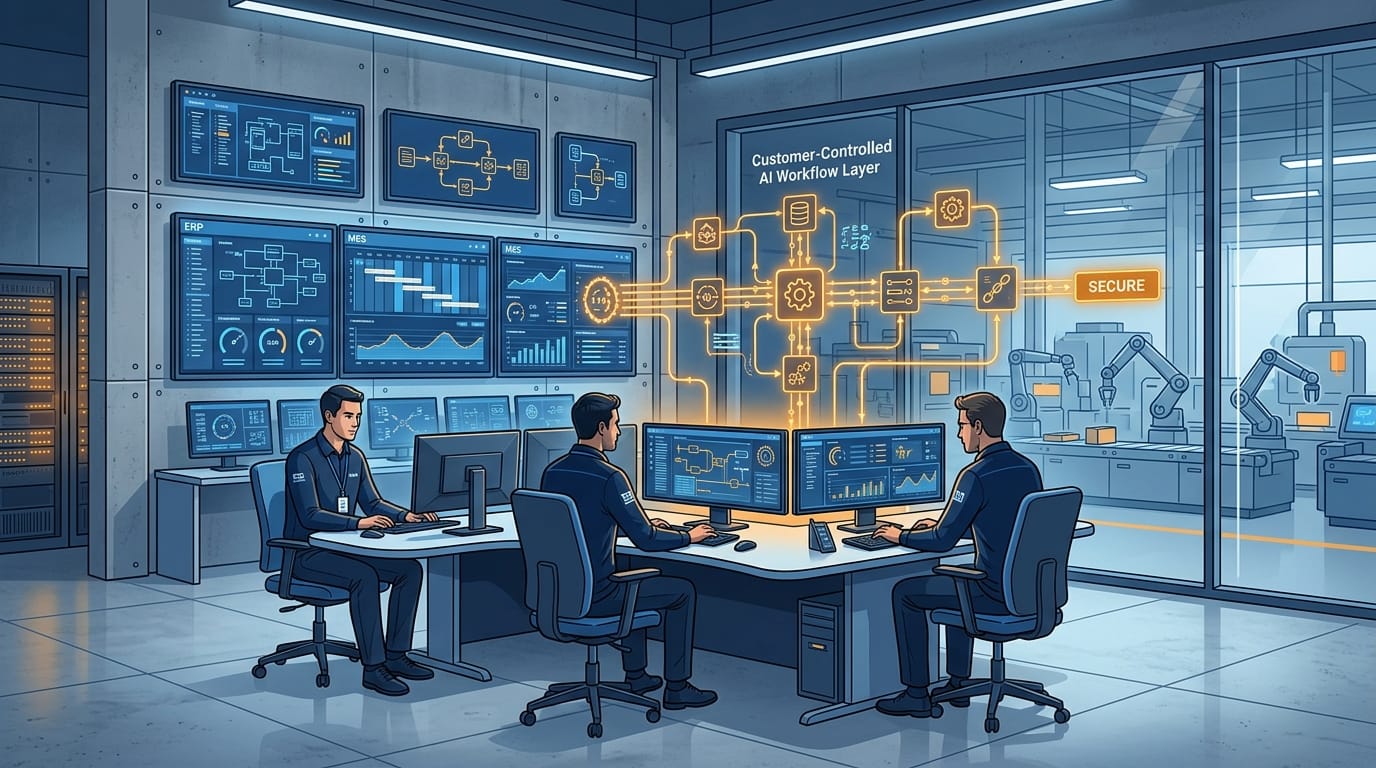

What does an AI-native, customer-controlled alternative actually look like?

It looks like a separate workflow intelligence layer running close to the systems of record, not buried inside them.

ERP, MES, QMS, PLM, service, and ticketing systems should keep doing what they are good at: storing core records, enforcing transactional integrity, and preserving operational history. The AI-native layer should do something different. It should gather evidence from those systems, build a decision packet, apply explicit policy, recommend or execute the next step, log what happened, and hand control back when the workflow requires a human. That is not a chatbot. It is an execution architecture.

The first component is connectors. If an AI workflow system only sees one application's fields, it will make shallow decisions. Industrial work depends on context from multiple systems: machine events, maintenance history, supplier commitments, quality records, open tickets, approvals, security roles, and attached documents. This is why connector coverage across enterprise systems is not an integration footnote. It is the minimum requirement for useful operational judgment.
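As a rough illustration of the connector idea, the sketch below gathers evidence for one case from several systems and keeps each record tagged with its source. The `Connector` protocol, the `StaticConnector` stand-in, and the field names are all hypothetical; a real deployment would wrap whatever ERP, MES, or QMS clients it actually has.

```python
from typing import Protocol


class Connector(Protocol):
    """Hypothetical interface: one connector per system of record."""
    name: str

    def fetch(self, case_id: str) -> dict: ...


class StaticConnector:
    """Stand-in for a real ERP/MES/QMS client; returns canned records."""

    def __init__(self, name: str, records: dict):
        self.name = name
        self._records = records

    def fetch(self, case_id: str) -> dict:
        # An unknown case yields an empty record rather than an error.
        return self._records.get(case_id, {})


def gather_evidence(case_id: str, connectors: list) -> dict:
    """Collect evidence per source so provenance stays visible downstream."""
    return {c.name: c.fetch(case_id) for c in connectors}


erp = StaticConnector("erp", {"C-100": {"open_po": "PO-7", "supplier": "S-12"}})
qms = StaticConnector("qms", {"C-100": {"open_deviations": 1}})
evidence = gather_evidence("C-100", [erp, qms])
```

Keying the result by source name is a deliberate choice in this sketch: a reviewer can see which system contributed which fact, instead of receiving one merged blob.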

The second component is governed context assembly. A good system has to know what evidence is mandatory before it acts. Did a prior quality deviation change the threshold? Is a supplier under temporary scrutiny? Is the issue tied to a safety-adjacent asset? Does the action require dual approval? Is there a service-level exception? Which document version is authoritative? A workflow layer that cannot answer those questions should not execute anything. This is where customer-controlled AI matters. The company needs visibility into what context is assembled and why.
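One way to picture "mandatory evidence before action" is a decision packet that refuses to be actionable until every required item is present. This is a minimal sketch under stated assumptions: the evidence keys and the `DecisionPacket` class are invented for illustration and do not come from any specific product.

```python
from dataclasses import dataclass, field

# Hypothetical evidence keys; a real deployment would derive these from its own policy.
MANDATORY_EVIDENCE = frozenset({
    "quality_deviation_history",
    "supplier_status",
    "asset_safety_class",
    "authoritative_document_version",
})


@dataclass
class DecisionPacket:
    case_id: str
    evidence: dict = field(default_factory=dict)

    def missing_evidence(self) -> set:
        """Return mandatory keys that are absent or empty."""
        return {
            key for key in MANDATORY_EVIDENCE
            if self.evidence.get(key) in (None, "", [])
        }

    def ready_to_act(self) -> bool:
        """The workflow may proceed only when nothing mandatory is missing."""
        return not self.missing_evidence()


packet = DecisionPacket("C-100", {"supplier_status": "under_review"})
blocked_on = packet.missing_evidence()  # three keys still missing
```

Because the gaps are returned as a set, the same check that blocks execution can also tell an operator exactly what to go and find.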

The third component is executable policy. Most manufacturers already have policy. What they lack is a clean way to turn policy into operational behavior without hard-coding every case into a brittle ERP branch. AI-native workflow systems should make policy explicit: what the model may infer, what it may draft, what it may execute, which thresholds require review, and which roles can override. Governance is not a review after the build. Governance is the build.
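To make "explicit policy" concrete, the sketch below expresses review thresholds and override roles as data rather than as branches buried in ERP customization. The thresholds, role names, and the rule that safety-adjacent cases always escalate are assumptions chosen for the example, not a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    REQUIRE_REVIEW = "require_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class ActionPolicy:
    action: str
    auto_limit_eur: float      # at or below this value: may execute without review
    review_limit_eur: float    # at or below this value: one reviewer; above: escalate
    override_roles: frozenset  # roles allowed to override the outcome


def evaluate(policy: ActionPolicy, amount_eur: float, safety_adjacent: bool) -> Decision:
    """Map a proposed action onto the lane the policy allows."""
    if safety_adjacent:
        # Assumption for this sketch: safety-adjacent cases never auto-execute.
        return Decision.ESCALATE
    if amount_eur <= policy.auto_limit_eur:
        return Decision.AUTO_EXECUTE
    if amount_eur <= policy.review_limit_eur:
        return Decision.REQUIRE_REVIEW
    return Decision.ESCALATE


def may_override(policy: ActionPolicy, role: str) -> bool:
    return role in policy.override_roles


supplier_exception = ActionPolicy(
    action="approve_supplier_exception",
    auto_limit_eur=500.0,
    review_limit_eur=5000.0,
    override_roles=frozenset({"plant_manager", "quality_lead"}),
)
```

Keeping the policy as an immutable value means a change to a threshold is a reviewable diff, not a code path someone has to rediscover later.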

The fourth component is deployment boundary control. Nvidia's AI factory framing is helpful because it pushes teams to think in terms of persistent AI operating environments. For many European manufacturers, the right version of that environment sits inside infrastructure they control directly or inside a tightly bounded customer-owned environment. That keeps logs, data paths, model access, and intervention rights inside a reviewable perimeter. When InfraHive talks about customer-controlled workflow automation, this is the practical reason it matters. Own the workflow boundary and you own the operating leverage.

The fifth component is action. The goal is not a prettier dashboard. The goal is fewer humans acting as middleware between systems. A useful manufacturing workflow agent can draft a maintenance triage packet, prepare a supplier exception decision, escalate a quality issue with evidence attached, or route a plant-support case with the right policy context already assembled. It can work inside a governed lane without pretending that autonomy means a lack of control.

Direct answer: The AI-native alternative is a customer-controlled workflow layer that connects to industrial systems, assembles governed evidence, applies explicit policy, and takes logged actions inside a controlled boundary.

What does implementation reality look like in a real plant environment?

Usually three to four weeks for the first serious workflow, assuming the team starts narrow and resists the urge to boil the ocean.

The right first workflow is not the flashiest one. It is the ugliest one. Pick the process where humans are clearly compensating for software weakness: maintenance triage across ticketing and ERP, supplier exception handling, plant-support routing, quality documentation review, spare-parts prioritization, or service-case escalation that depends on multiple systems. These workflows already reveal the evidence gaps, policy ambiguity, and handoff friction that an AI-native layer can remove.

Week one should map reality, not PowerPoint. Which systems contain authoritative records? Which approvals happen off-system? What evidence does a supervisor actually look for before deciding? Which actions are safe to draft but not execute? Which exceptions recur every week? Most teams discover the documented process is cleaner than the real one. Good. Hidden mess is exactly what the old automation stack fails to handle, and exactly what a new workflow layer should expose.

Week two is about connectors, policy boundaries, and intervention design. Bring the relevant ERP, MES, QMS, ticketing, procurement, document, or security signals into one governed workflow environment. Define what the system can read. Define what it can write. Define thresholds for auto-action, mandatory review, and escalation. Define what gets logged and how operators can inspect a decision path. This is also the phase where security and compliance reviews become easier, because on-prem or customer-controlled deployment removes a lot of hand-waving. You can point to the boundary instead of apologizing for it.
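The "what gets logged and how operators can inspect a decision path" step could be sketched as an append-only log in which each entry is chained to the previous one by a hash, so later edits are detectable. This is one possible technique, not a statement of how any particular product logs decisions; the record fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    # Serialize with sorted keys so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> dict:
    """Append a decision record, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    }
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"case": "C-100", "action": "draft_triage", "actor": "agent"})
append_entry(audit_log, {"case": "C-100", "action": "approve", "actor": "supervisor"})
```

A hash chain only shows that the log was not altered after the fact. Where the log is stored and who can write to it are still deployment-boundary questions.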

Weeks three and four are a limited rollout. One plant. One issue class. One exception band. One supplier cohort. Measure cycle time, human touches, escalation accuracy, rework, and intervention rate. Keep ERP or MES as the system of record if needed. Replace the workflow intelligence first. That migration path is much safer than trying to rip out core systems before the economics are proven.
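The rollout measurements listed above can be computed from a simple per-case record. The sketch below assumes a hypothetical `CaseOutcome` structure and illustrative definitions for each metric; teams will likely define escalation accuracy and rework differently for their own workflows.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class CaseOutcome:
    cycle_time_hours: float
    human_touches: int
    escalated: bool
    escalation_correct: bool  # only meaningful when escalated is True
    reworked: bool
    human_intervened: bool


def summarize(cases: list) -> dict:
    """Aggregate pilot metrics over a batch of closed cases."""
    if not cases:
        raise ValueError("no cases to summarize")
    total = len(cases)
    escalated = [c for c in cases if c.escalated]
    return {
        "median_cycle_time_hours": median([c.cycle_time_hours for c in cases]),
        "mean_human_touches": mean([c.human_touches for c in cases]),
        # Share of escalations a reviewer judged to be warranted.
        "escalation_accuracy": (
            sum(c.escalation_correct for c in escalated) / len(escalated)
            if escalated else None
        ),
        "rework_rate": sum(c.reworked for c in cases) / total,
        "intervention_rate": sum(c.human_intervened for c in cases) / total,
    }


pilot_cases = [
    CaseOutcome(2.0, 1, False, False, False, False),
    CaseOutcome(4.0, 2, True, True, False, True),
    CaseOutcome(6.0, 3, True, False, True, True),
    CaseOutcome(8.0, 2, False, False, False, False),
]
summary = summarize(pilot_cases)
```

Running the same summary on a pre-pilot baseline gives a like-for-like comparison before the workflow is expanded to another plant or issue class.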

The objections are predictable. "Our data is too messy." It is messy everywhere; that is why governed context assembly exists. "We already automated this." If staff still reconstruct context before acting, then no, you automated intake, not workflow. "We'll wait for the ERP vendor roadmap." That usually means waiting while internal operators continue doing the integration work by hand.

Direct answer: Real implementation starts with one ugly, high-friction workflow, wraps it in a customer-controlled evidence and policy layer, proves the metrics, then expands from there.

What results should manufacturers actually expect?

The first win is speed.

Cases move faster because the system arrives with evidence assembled. Analysts spend less time hunting for context. Approvers spend less time asking basic questions. Escalations improve because the workflow knows what threshold was crossed and what supporting material exists. Rework drops because logging, write-back, and intervention behavior are built in.

The second win is reuse. Once the company has connectors, policy primitives, review states, and audit patterns for one workflow, the next workflow becomes cheaper to deploy. That reuse is the real economic advantage. You are not buying one automation. You are building the conditions under which multiple automations become governable and fast to ship.

This is where MetricFlow and adjacent workflow infrastructure become useful reference points. The real value is not one clever model call. The value is a production environment where finance, operations, IT, and support can run on shared governed primitives. You can see the direction clearly in real customer workflow outcomes: the proof is fewer manual handoffs, better cycle times, and lower marginal cost for each additional workflow moved onto the same stack.

Direct answer: Expect faster decision cycles, fewer manual context-gathering steps, cleaner audit trails, and lower incremental cost as more workflows reuse the same controlled automation foundation.

What does this mean for European manufacturing leaders now?

It means AI procurement and workflow architecture can no longer be separated.

European manufacturers should stop evaluating AI only on model performance or demo quality. They should ask harder questions. Where does the workflow run? Who controls the data path? What can be inspected after an action? Which policies are explicit? How does a human intervene? Can the system stay inside a boundary the company actually governs? Those questions are no longer paranoia. They are table stakes.

The companies that move early will not just become more compliant. They will build faster operating systems for the factory and the back office. The next competitive gap in manufacturing will not come from prettier dashboards. It will come from who can route, decide, escalate, and execute faster without surrendering control of the process.

Direct answer: European manufacturing leaders should treat customer-controlled AI workflow infrastructure as a strategic capability, not as an implementation detail delegated to a vendor.

So what should a manufacturer do next?

Pick one workflow where your people are obviously acting as glue between systems. Rebuild that path with an inspectable AI workflow layer that runs inside a boundary you control. Keep your ERP and MES where they still make sense. Just stop pretending that more custom logic inside old software is a long-term answer.

If you want to see what customer-controlled workflow automation looks like in practice, start at https://infrahive.ai, review how InfraHive approaches security and deployment boundaries, and explore how this works for your stack. The winners will not be the manufacturers with the most AI pilots. They will be the ones that own the workflow layer.

Direct answer: Start with one compliance-sensitive, cross-system workflow, prove the operating and governance gains on your own infrastructure, then expand deliberately.

Frequently Asked Questions

Does on-prem AI mean replacing ERP or MES?

No. The safer first move is to keep ERP and MES as systems of record and replace the brittle workflow layer around them.

Why does the EU AI Act change deployment decisions?

Because once AI systems influence operational actions, companies need clearer control over logging, intervention, data paths, and policy enforcement.

What kinds of workflows should manufacturers start with?

Start with cross-system workflows that generate lots of manual context gathering, such as maintenance triage, supplier exceptions, quality documentation review, or plant-support routing.

Why is customer-controlled deployment better than a generic cloud copilot?

Because it gives the manufacturer tighter control over evidence access, execution rights, auditability, security review, and operational policy.

What is the biggest implementation mistake?

The biggest mistake is trying to transform the whole plant stack at once instead of proving one high-friction workflow first.