On-Prem AI Quality Control: Why European Manufacturers Are Rebuilding the Workflow, Not Buying Another Vision SaaS

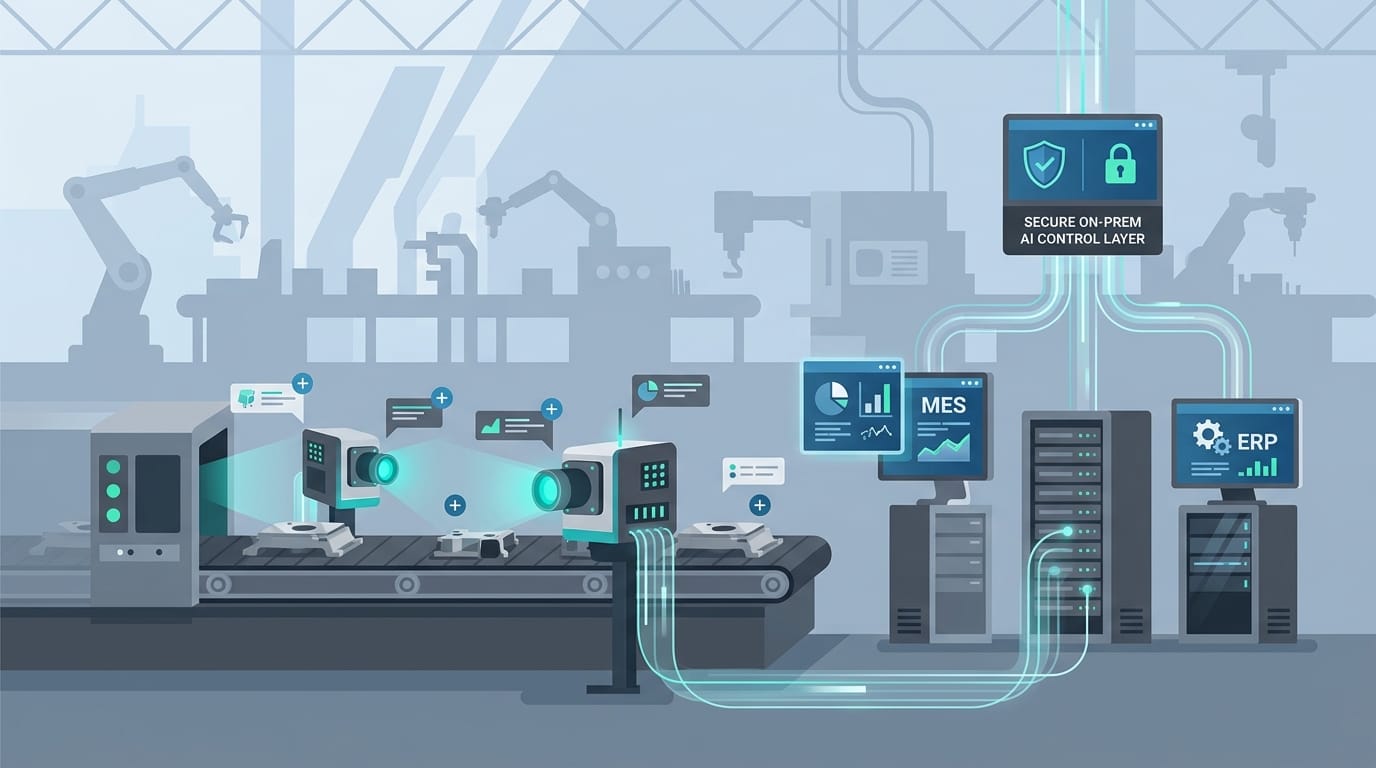

TL;DR: On-prem AI quality control is becoming the practical answer for European manufacturers that want defect detection, remediation, and auditability in one system they control. Gartner said in March 2024 that 55% of supply chain organizations will invest in applications supporting AI and advanced analytics by 2026, while the European Commission says the AI Act entered into force on 1 August 2024. The message is blunt: industrial AI is no longer a lab curiosity, but quality decisions cannot live in another black-box SaaS layer disconnected from MES, ERP, and plant governance.

Factories do not lose money because a model fails to draw a box around a defect. They lose money because the signal lands in the wrong place, too late, without context, and no one can turn it into a controlled action fast enough.

The next generation of manufacturing quality systems will not be judged by whether they can detect an anomaly. They will be judged by whether the manufacturer owns the workflow that decides what happens next.

Why is on-prem AI quality control suddenly worth rebuilding?

Because the pilot era has run out of excuses.

For the last several years, quality-control AI in manufacturing often meant a narrow computer-vision demo: install cameras, collect images, train a model, detect scratches, misalignments, missing parts, or packaging errors, then push alerts into a dashboard. Those pilots were useful, but most of them stopped exactly where the expensive work begins. Someone still had to decide whether the defect was real, which line or supplier lot was affected, whether production should slow or continue, whether the issue required rework, maintenance, quarantine, or a supplier claim, and how the cost should show up in downstream reporting.

That gap matters more now because industrial AI has moved from experimentation into mainstream budget planning. Gartner said in March 2024 that 55% of supply chain organizations will invest in applications that support AI and advanced analytics capabilities by 2026. At the same time, the European Commission says the AI Act is the first-ever legal framework on AI and that it entered into force on 1 August 2024. In other words, manufacturers are being pushed in two directions at once: spend seriously on AI and govern it seriously too.

If the answer is just “buy another hosted inspection tool,” the factory ends up with the same old problem in a nicer interface. Defect detection improves, but the operating logic still lives across MES workflows, ERP transactions, spreadsheets, email chains, supplier portals, and human judgment calls.

Direct answer: On-prem AI quality control is becoming urgent because manufacturers need production AI that can both act on plant evidence and stay inside a deployment boundary they can inspect, govern, and improve.

What is broken in the old quality-control stack?

Almost every handoff after the model makes a prediction.

The traditional stack is fragmented by design. Cameras and edge devices capture images. A quality application scores them. MES holds production context. ERP holds inventory, costing, and supplier information. Maintenance systems own machine history. Operators keep workarounds in notebooks or shift handovers. Engineers maintain thresholds in one place, inspectors override them in another, and procurement finds out about recurring supplier defects after the damage has already hit margin.

That setup survives when the line volume is manageable and experienced people act as the glue. It breaks when manufacturers want consistent decisions across plants, shifts, and product families. A defect alert without workflow context is just an expensive notification. It does not tell the plant whether to stop a line, quarantine a lot, reroute output, trigger a maintenance inspection, generate a supplier non-conformance, or escalate to a human reviewer with the right evidence.

This is also where the Europe-specific pressure shows up. Siemens has argued through its Manufacturing-X commentary that data sovereignty is becoming foundational for industrial competitiveness. That matters because a manufacturer is not just protecting images. It is protecting process know-how, quality thresholds, supplier performance patterns, exception logic, and operating rhythm. Once those decisions are mediated by a vendor-owned AI layer, the plant starts renting back pieces of its own judgment system.

Microsoft and Siemens point in the same direction. Their industrial AI collaboration signals that AI is moving closer to industrial software estates and real operations. But that does not automatically solve the workflow problem: mainstream adoption without workflow redesign simply creates smarter alerts feeding the same brittle process chain.

Direct answer: The old quality stack fails because defect detection is separated from the governed actions that determine scrap, rework, supplier response, maintenance intervention, and financial impact.

What does an AI-native quality-control system actually look like?

Not another dashboard. A manufacturer-controlled workflow layer.

Keep the systems of record. Keep the cameras, PLC-connected data sources, MES, ERP, and quality databases that already matter. The architectural change is to add a control layer that can gather evidence from those systems, apply explicit policy, propose or execute the next action, and log the result in a way the manufacturer can audit later.

The first requirement is connector depth. Quality AI is useless if it sees only images. It needs the production order, machine state, operator station, tolerance profile, prior defect history, supplier lot, maintenance events, and downstream shipment risk. That is why deep operational connectors matter. Real inspection decisions are composite decisions. The model output is only one input.
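As a rough illustration of a composite decision input, the sketch below assembles one evidence packet from several sources. Everything in it is an assumption made for the example: the field names, the `assemble_evidence` helper, and the in-memory dictionaries standing in for MES, ERP, and maintenance connectors are hypothetical and do not reflect any real product API.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical in-memory stand-ins for MES, ERP, and maintenance connectors.
MES_ORDERS = {
    "PO-1001": {"machine": "press-07", "station": "S3", "product_family": "housing"},
}
ERP_LOTS = {"PO-1001": {"supplier_lot": "LOT-88A"}}
MAINTENANCE_EVENTS = {"press-07": ["2024-05-02 die change"]}


@dataclass
class EvidencePacket:
    """Context a reviewer or policy needs to judge one inspection signal."""
    production_order: str
    defect_type: str
    model_confidence: float
    machine: Optional[str] = None
    station: Optional[str] = None
    product_family: Optional[str] = None
    supplier_lot: Optional[str] = None
    maintenance_events: List[str] = field(default_factory=list)
    missing: List[str] = field(default_factory=list)  # context that could not be found


def assemble_evidence(production_order: str, defect_type: str,
                      confidence: float) -> EvidencePacket:
    """Join the model output with production, supplier, and maintenance context."""
    packet = EvidencePacket(production_order, defect_type, confidence)

    order = MES_ORDERS.get(production_order)
    if order is None:
        packet.missing.append("mes_order")
    else:
        packet.machine = order["machine"]
        packet.station = order["station"]
        packet.product_family = order["product_family"]
        packet.maintenance_events = list(MAINTENANCE_EVENTS.get(packet.machine, []))

    lot = ERP_LOTS.get(production_order)
    if lot is None:
        packet.missing.append("erp_lot")
    else:
        packet.supplier_lot = lot["supplier_lot"]

    return packet
```

Recording what is missing, rather than failing silently, lets a downstream policy treat an incomplete packet differently from a complete one.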

The second requirement is policy. Manufacturers already have rules, but most of them are buried in SOPs, tribal memory, or software settings that were never meant to work together. Which defects can auto-quarantine a lot? Which ones require a second model or a human review? What confidence threshold changes by product family? When should the system trigger a maintenance check because the defect pattern suggests tool wear rather than supplier variation? Policy has to become executable. Otherwise the AI still depends on people to convert judgment into action.
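One way to picture executable policy is a small function that maps an inspection signal to a named action. The sketch below is illustrative only: the thresholds, the defect names, the repeat count that suggests tool wear, and the action labels are all assumptions, and real values would come from quality engineering.

```python
from dataclasses import dataclass

# Hypothetical confidence thresholds per product family for auto-quarantine.
AUTO_QUARANTINE_THRESHOLD = {"housing": 0.95, "connector": 0.90}
DEFAULT_THRESHOLD = 0.97      # assumed stricter default for unknown families
HUMAN_REVIEW_FLOOR = 0.50     # assumed floor below which a signal is only logged

# Hypothetical defect types whose repetition suggests tool wear, not supplier variation.
TOOL_WEAR_DEFECTS = {"burr", "dimension_drift"}
TOOL_WEAR_REPEATS = 3


@dataclass(frozen=True)
class Signal:
    product_family: str
    defect_type: str
    confidence: float
    repeats_on_machine: int  # same defect on the same machine in the current shift


def decide(signal: Signal) -> str:
    """Return one named action for an inspection signal."""
    # A repeating wear-type defect points at the machine, so check it first.
    if (signal.defect_type in TOOL_WEAR_DEFECTS
            and signal.repeats_on_machine >= TOOL_WEAR_REPEATS):
        return "maintenance_check"

    threshold = AUTO_QUARANTINE_THRESHOLD.get(signal.product_family, DEFAULT_THRESHOLD)
    if signal.confidence >= threshold:
        return "auto_quarantine"
    if signal.confidence >= HUMAN_REVIEW_FLOOR:
        return "human_review"
    return "log_only"
```

Keeping the rules in data (the threshold table and defect set) rather than scattered across tools is what makes them reviewable and versionable.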

The third requirement is deployment control. This is where on-prem changes the economics. When the quality workflow runs in an environment the manufacturer controls, the plant controls the data path, the model lifecycle, the intervention logic, and the audit trail. That matters for security, yes, but also for operating leverage. A team can iterate quickly without waiting for a vendor roadmap or shipping sensitive process data into another external surface. That is exactly why customer-controlled security and deployment boundaries matter in industrial AI.

The fourth requirement is a closed loop into operations and finance. Quality defects are not just production events. They hit scrap rates, throughput, supplier claims, customer commitments, warranty risk, and unit economics. A serious AI workflow therefore needs reporting and financial visibility tied to the same action layer. If the system is flagging defects but leadership cannot see the root-cause pattern, rework load, and cost movement, the plant still has a visibility problem. That is where a system like MetricFlow becomes useful: not as a vanity dashboard, but as the reporting spine that turns workflow decisions into measurable business impact.
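To make the link between workflow decisions and cost concrete, here is a small sketch that rolls closed cases up by root cause. The case records, the euro figures, and the rework labor rate are invented for illustration, and the function is not MetricFlow's API, only a stand-in for the kind of aggregation a reporting layer might perform.

```python
from collections import defaultdict

# Hypothetical closed workflow cases with illustrative figures.
cases = [
    {"root_cause": "tool_wear", "scrap_eur": 1200.0, "rework_hours": 3.0},
    {"root_cause": "supplier_lot", "scrap_eur": 800.0, "rework_hours": 1.5},
    {"root_cause": "tool_wear", "scrap_eur": 400.0, "rework_hours": 2.0},
]

REWORK_RATE_EUR_PER_HOUR = 60.0  # assumed loaded labor rate


def cost_by_root_cause(closed_cases, rework_rate=REWORK_RATE_EUR_PER_HOUR):
    """Sum scrap cost plus rework labor cost for each root cause."""
    totals = defaultdict(float)
    for case in closed_cases:
        rework_cost = case["rework_hours"] * rework_rate
        totals[case["root_cause"]] += case["scrap_eur"] + rework_cost
    return dict(totals)


print(cost_by_root_cause(cases))
# {'tool_wear': 1900.0, 'supplier_lot': 890.0}
```

Because every case already carries its root cause from the workflow, the rollup needs no month-end reconstruction.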

InfraHive's natural place in this architecture is not “AI on top of manufacturing.” It is building AI-native workflow systems that sit across quality, ERP, maintenance, and support environments so the manufacturer owns the operational logic. A defect should not disappear into a vendor queue. It should enter a governed workflow that knows what to check, what policy applies, what action is allowed, and who needs to see the evidence.

Direct answer: An AI-native quality-control system is a manufacturer-controlled evidence, policy, and action layer that turns inspection signals into governed operational decisions across MES, ERP, maintenance, and supplier workflows.

What does implementation look like in the real world?

Usually eight to twelve weeks for the first serious workflow, not a multi-year “factory of the future” program.

The wrong starting point is “put AI into quality.” That is vague enough to waste a quarter. The right starting point is one recurring pain point where defects create expensive ambiguity. For example: false-positive inspection alerts on a high-volume line, delayed quarantine decisions, repetitive manual review of packaging defects, inconsistent supplier-lot escalation, or quality issues that are actually machine-condition problems in disguise.

Phase one is mapping the decision chain. Which system holds the image? Which one holds the tolerance spec? Where is the source of truth for lot genealogy, machine history, and production order context? Which actions can be automated safely, and which require human confirmation? This step usually reveals that the quality process is more fragmented than anyone wants to admit.

Phase two is boundary design. Keep existing systems of record, but let the AI workflow layer assemble the evidence packet and apply explicit policies. Some cases can be auto-routed. Some can open a human review screen with ranked evidence. Some can trigger immediate quarantine, and others can create a supplier case or maintenance task. The key is not full autonomy. The key is controlled autonomy with clear intervention paths.
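Controlled autonomy with clear intervention paths can be sketched as an allow-list plus an audit record for every decision. The split between unattended and approval-gated actions below is hypothetical, as are the action names and the `handle` function; a real plant would set these boundaries per product and risk level.

```python
from datetime import datetime, timezone
from typing import Optional

# Hypothetical split: actions that may run unattended vs. those needing a named approver.
AUTONOMOUS_ACTIONS = {"route_to_review", "quarantine_lot"}
CONFIRMATION_REQUIRED = {"open_supplier_case", "open_maintenance_task"}

AUDIT_LOG = []  # in-memory list standing in for a durable, append-only audit store


def handle(case_id: str, proposed_action: str, approver: Optional[str] = None) -> str:
    """Execute, hold, or reject a proposed action and record the outcome."""
    if proposed_action in AUTONOMOUS_ACTIONS:
        status = "executed"
    elif proposed_action in CONFIRMATION_REQUIRED:
        # Gated actions run only once a human approver is attached.
        status = "executed" if approver else "pending_approval"
    else:
        # Anything outside both lists is never executed.
        status = "rejected"

    AUDIT_LOG.append({
        "case_id": case_id,
        "action": proposed_action,
        "status": status,
        "approver": approver,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return status
```

For example, `handle("C-2", "open_supplier_case")` returns `"pending_approval"` until it is called again with an `approver`, and both attempts appear in the log.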

Phase three is rollout. Start with one plant, one line, one defect family, or one supplier-sensitive process. Measure review latency, override rate, scrap avoidance, rework hours, and root-cause clarity. Expand only when the workflow proves it can reduce friction without adding hidden risk.
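Two of the rollout measures named above, review latency and override rate, are simple to compute once each review is logged. The records below are made-up pilot data and the field names are assumptions chosen for the example.

```python
from statistics import median

# Hypothetical review records from a pilot line: minutes from alert to decision,
# and whether a human overrode the workflow's proposed action.
reviews = [
    {"latency_min": 4.0, "overridden": False},
    {"latency_min": 12.5, "overridden": True},
    {"latency_min": 6.0, "overridden": False},
    {"latency_min": 9.0, "overridden": False},
]


def rollout_metrics(records):
    """Return median review latency and the share of proposals humans overrode."""
    if not records:
        raise ValueError("at least one review record is required")
    latencies = [r["latency_min"] for r in records]
    overrides = sum(1 for r in records if r["overridden"])
    return {
        "median_review_latency_min": median(latencies),
        "override_rate": overrides / len(records),
    }


print(rollout_metrics(reviews))
# {'median_review_latency_min': 7.5, 'override_rate': 0.25}
```

A rising override rate is an early sign that the policy or the model no longer matches what reviewers see on the line, which is a reason to pause expansion.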

The common objections are predictable. “Our image data is messy.” Good. That means you should centralize evidence assembly instead of pretending the model alone is the product. “Our MES vendor already has AI features.” Fine, but that rarely solves cross-system policy and enterprise reporting. “We cannot replace everything.” You should not. The practical move is to replace the brittle decision layer first while keeping systems of record intact.

This is also where the forward-deployed engineer model wins. Manufacturing workflows differ by plant, product, tolerance, and operator reality. Generic templates are rarely enough. The fastest path is a close build-measure-iterate loop with the people who understand the line, the defect economics, and the constraints.

Direct answer: Start with one defect-driven workflow, keep your systems of record, and build a controlled AI layer around evidence assembly, policy execution, and human intervention.

What results should a plant expect?

First, faster and more consistent triage. Operators and engineers stop wasting time reconstructing context from multiple systems after every alert.

Second, fewer manual handoffs. Instead of bouncing among dashboards, spreadsheets, and inboxes, the defect enters a workflow that already knows the line context, supplier lot, tolerance profile, and allowed actions.

Third, better root-cause tracking. Because the workflow ties visual evidence to maintenance, process, and supplier signals, the factory can distinguish recurring machine drift from incoming material issues or operator variance much earlier.

Fourth, stronger financial visibility. Scrap, rework, claims, and throughput impact can be tied back to the same governed workflow rather than pieced together at month end. That matters far more than a demo accuracy number. The pattern is consistent with results from customer-controlled workflow automation: fewer manual handoffs, faster cycle time, and a compounding payoff as more adjacent decisions move onto the same foundation.

Direct answer: Expect lower review latency, fewer avoidable handoffs, clearer root causes, and much better visibility into the real cost of quality decisions.

What does this mean for European manufacturers?

It means quality strategy is now infrastructure strategy.

European manufacturers do not just need accurate models. They need systems that can show where evidence came from, what policy was applied, what action was taken, and who could intervene. The AI Act and the broader sovereignty push do not create the need for better architecture; they expose it. Plants that keep quality logic fragmented across hosted tools and tribal workarounds will struggle to scale trustworthy automation. Plants that own the workflow layer will move faster because they can improve quality AI without giving up control of process knowledge or operating data.

Direct answer: The competitive edge is not merely seeing more defects. It is owning the quality workflow that decides how the factory responds.

So what should a manufacturing leader do next?

Pick one ugly quality process where your team still acts as middleware between inspection tools, MES, ERP, and supplier response. Rebuild that path as a governed AI workflow running in an environment you control. Keep the tools that still earn their place. Just stop treating defect detection as the finish line.

If you want to see what that architecture looks like in practice, start at https://infrahive.ai, review the security model for customer-controlled deployment, and explore how this works for your stack. The manufacturers that own their AI quality layer will not just catch problems faster. They will run tighter plants.

Direct answer: Build the workflow around the model now, because a vision pilot without controlled remediation is just a more expensive way to discover the same old bottlenecks.

Frequently Asked Questions

Does on-prem AI quality control mean replacing MES or ERP?

No. The practical move is to keep systems of record in place and add a governed AI workflow layer across them so inspection decisions stop depending on disconnected tools and manual handoffs.

What should a manufacturer automate first in quality control?

Start with one repetitive decision such as false-positive review, quarantine routing, supplier-lot escalation, rework triage, or machine-drift detection tied to visual defects.

Why does data sovereignty matter in manufacturing quality AI?

Because images are only part of the value. The real asset is the process knowledge, tolerance logic, supplier performance pattern, and remediation policy that define how the plant responds.

How is this different from a computer-vision inspection tool?

A vision tool detects conditions. An AI-native quality workflow governs what evidence is assembled, what action is allowed, which systems are updated, and when humans must intervene.

What is the biggest mistake manufacturers make with quality AI?

The biggest mistake is treating defect detection as the product while leaving the expensive operational judgment spread across spreadsheets, dashboards, emails, and tribal memory.