Why Manufacturers Are Rebuilding ERP Workflows Around On-Prem AI Agents

TL;DR: On-prem AI agents for manufacturing are replacing rigid ERP workflow layers because plants need faster exception handling, tighter data control, and systems that can act across MES, quality, maintenance, procurement, and finance without shipping operational logic into a vendor black box. Recent 2026 signals all point the same way: NVIDIA and partners showcased AI-driven manufacturing deployments at Hannover Messe 2026, Michigan State University said the inaugural Apple Manufacturing Academy Spring Forum explored the future of AI in manufacturing, Apple said its Manufacturing Academy is accelerating AI use in U.S. supply chains, NIST scheduled an Artificial Intelligence for Manufacturing workshop, and Express Computer reported that UiPath is pushing on-premises agentic AI deployment for enterprises via Automation Suite.

The market is leaving the pilot stage. The serious question is no longer whether factories will use AI. It is where the workflow intelligence and execution control will live once AI starts touching real operational work.

If your plant still depends on ERP screens, spreadsheets, and email chains to resolve production exceptions, you do not have a software problem anymore. You have a workflow-architecture problem.

Why are manufacturers pulling workflow logic out of ERP?

Because ERP was built to record transactions, not to make plant-speed judgments.

That distinction matters more than most software vendors admit. Systems like SAP, Oracle, and NetSuite are strong at persistence, controls, and canonical records. They are weak at handling the messy middle where manufacturing work actually stalls: supplier exceptions, maintenance triage, quality escapes, invoice mismatches, engineering-change fallout, expedites, and the endless stream of "this does not fit the template" decisions that operators make every day.

In most factories, those decisions still bounce between ERP modules, MES screens, shared inboxes, spreadsheets, maintenance systems, and tribal knowledge. The result is predictable. Planners wait for clarifications. Supervisors re-enter data. AP teams reconcile by hand. Maintenance coordinators chase approvals across three systems. Support teams answer questions that the software stack should already be able to resolve. ERP is still the system of record, but it becomes the wrong place to host living operational judgment.

The 2026 news cycle reinforces that this is no longer theoretical. NVIDIA and partners used Hannover Messe 2026 to showcase AI-driven manufacturing deployments. Michigan State University highlighted how the inaugural Apple Manufacturing Academy Spring Forum explored the future of AI in manufacturing. Apple separately said its Manufacturing Academy is accelerating AI use in U.S. supply chains. NIST scheduled an Artificial Intelligence for Manufacturing workshop, which is a neat way of saying the standards and trust questions are now serious enough to deserve formal attention. And Express Computer reported that UiPath is pushing on-premises agentic AI deployment for enterprises via Automation Suite, confirming that controlled deployment is becoming a buying criterion rather than a niche request.

Europe reads that through sovereignty and auditability. The US reads it through labor pressure and uptime. Same conclusion: AI is entering execution, and the old ERP-centered model is too rigid.

Direct answer: Manufacturers are pulling workflow logic out of ERP because the valuable work is no longer transaction entry. It is exception handling, prioritization, routing, and action across systems that ERP alone was never designed to orchestrate gracefully.

What is broken in the old ERP-centered operating model?

The problem is not that ERP exists. The problem is that too much judgment is trapped around it.

Take a common production exception. A late supplier shipment threatens a work order. Inventory says one thing, the planner spreadsheet says another, MES shows a machine window, quality has open non-conformance history on an alternate component, procurement has an updated ETA in email, and finance wants to avoid an expedite if possible. The ERP holds pieces of the truth, but not the actual decision workflow. So people compensate manually. They email. They call. They re-check screens. They create side lists. Then they push a final decision back into ERP as if the software had meaningfully supported the choice.

The same pattern shows up in maintenance. Work orders are opened, spare parts are checked, machine history is reviewed, technician availability changes, and root-cause notes live across maintenance software, MES, PDFs, and human memory. Or look at finance workflows inside manufacturing groups: invoice matching, goods-received discrepancies, chargeback disputes, and month-end close exceptions often still rely on people to interpret scattered evidence before any ERP transaction can be finalized.

This is why adding a generic AI copilot rarely solves the real issue. If the model can answer questions but cannot reach governed context from the right systems, apply business policy, and trigger actions safely, then it is just another surface sitting on top of the same bottleneck. Manufacturers do not need more interface layers. They need the decision path itself rebuilt.

The deeper problem is commercial as much as technical. Once the workflow layer sits inside a vendor-owned boundary, the manufacturer starts renting the logic that decides how operations run. That means renting connector coverage, approval design, traceability, and the cost of changing tools later.

Direct answer: The ERP-centered model breaks when decisions require cross-system context, local policy, and operational trade-offs in real time. That work lives around ERP, not inside its rigid transaction flows.

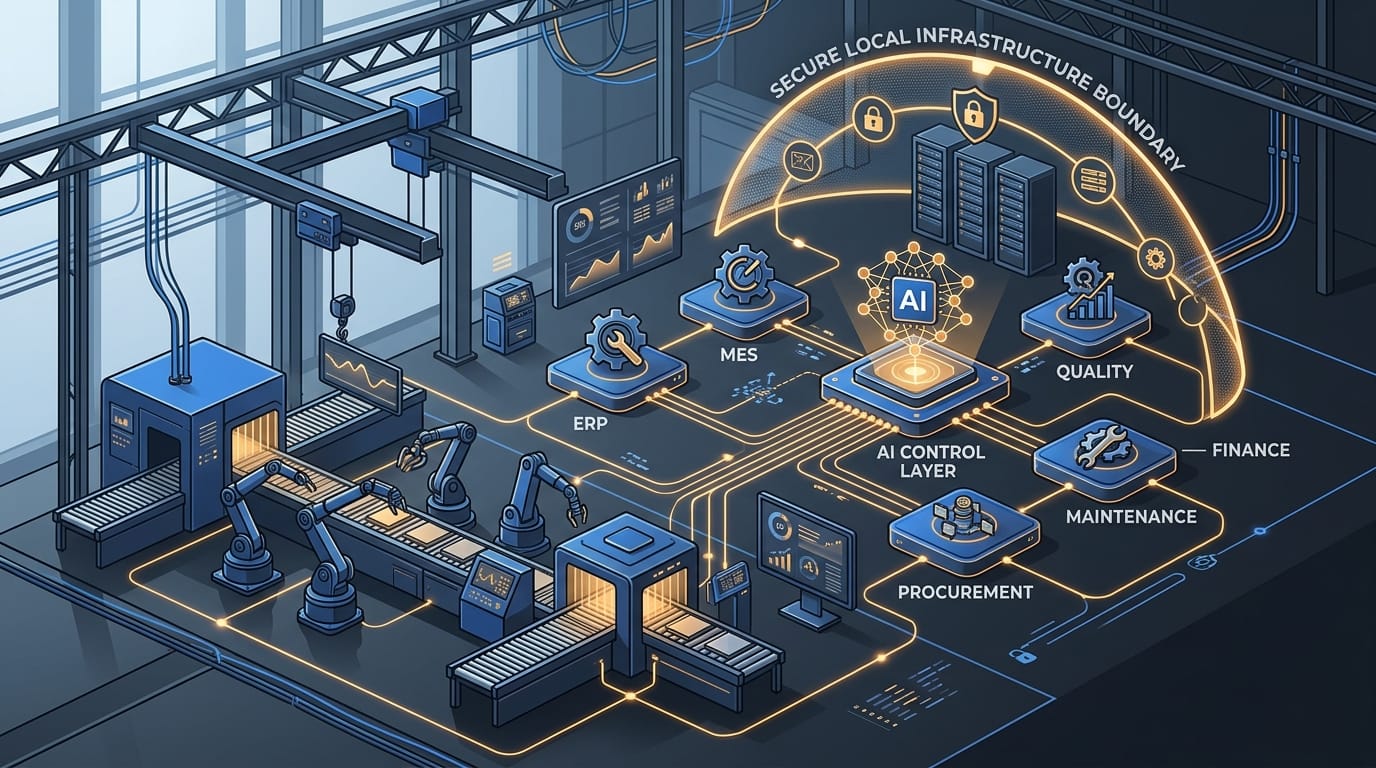

What does an on-prem AI agent architecture for manufacturing actually look like?

It looks less like a software extension and more like a controlled workflow engine sitting between plant systems and enterprise systems.

The first layer is connectors. Without them, nothing interesting happens. A useful manufacturing agent needs governed access to ERP, MES, CMMS or maintenance tools, quality systems, document stores, procurement records, inventory data, and often email or ticketing flows. This is where most vendor demos quietly cheat. They show the model being clever after somebody has already done the painful systems work. In reality, connector design is the product. That is why reliable enterprise connectors matter so much: they determine whether the agent is reasoning over live operational truth or stale fragments.
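As a minimal sketch of the "live truth versus stale fragments" point, the connector boundary can carry a read timestamp with every record so the agent can refuse to reason over old data. Everything here is an assumption for illustration: the `Connector` interface, the `InMemoryConnector` stand-in, the system labels, and the 15-minute freshness window are hypothetical, not any vendor's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Protocol


@dataclass(frozen=True)
class Record:
    source: str           # illustrative system label such as "erp" or "mes"
    key: str              # business key, e.g. a work-order number
    payload: dict
    fetched_at: datetime  # when the connector last read the source system


class Connector(Protocol):
    """Hypothetical read interface that each system connector would implement."""
    name: str

    def fetch(self, key: str) -> Record: ...


class InMemoryConnector:
    """Stand-in for a real ERP or MES connector, backed by a dict for the demo."""

    def __init__(self, name: str, rows: dict, fetched_at: datetime):
        self.name = name
        self._rows = rows
        self._fetched_at = fetched_at

    def fetch(self, key: str) -> Record:
        return Record(self.name, key, self._rows[key], self._fetched_at)


def fetch_all(connectors: list[Connector], key: str) -> list[Record]:
    """Collect the same business key from every connected system."""
    return [c.fetch(key) for c in connectors]


def is_stale(record: Record, max_age: timedelta, now: datetime) -> bool:
    """True when the record is older than the workflow can tolerate."""
    return now - record.fetched_at > max_age


# Fixed clock so the example is deterministic.
now = datetime(2026, 5, 1, 12, 0, tzinfo=timezone.utc)
erp = InMemoryConnector("erp", {"WO-1001": {"status": "released"}}, now - timedelta(minutes=2))
mes = InMemoryConnector("mes", {"WO-1001": {"machine": "M-07"}}, now - timedelta(hours=3))

records = fetch_all([erp, mes], "WO-1001")
stale_sources = [r.source for r in records if is_stale(r, timedelta(minutes=15), now)]
```

In this toy setup the MES record is three hours old, so it is the one flagged as stale. A real connector would also need authentication, error handling, and write paths, which are left out here.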

The second layer is retrieval and context assembly. A plant-side AI agent should not merely pull raw records. It should gather the exact evidence needed for the workflow: order status, machine history, supplier ETA, quality holds, BOM substitutions, approval thresholds, or prior exception patterns. This turns the system from a search layer into a decision layer. A late-component workflow, for example, may need to weigh supplier reliability, current WIP exposure, alternate stock, cost impact, and machine schedule before recommending action.
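The late-component example can be sketched as a small context object plus a recommendation function. This is an illustration under stated assumptions, not a production decision model: the field names, the 0.9 reliability threshold, and the rule order are all hypothetical and would come from the plant's own policy.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class LateComponentContext:
    supplier_on_time_rate: float     # 0..1, historical supplier reliability
    delay_days: int                  # how far the supplier ETA has slipped
    buffer_days: int                 # slack between original ETA and the machine slot
    alternate_stock_qty: int         # alternate component on hand
    required_qty: int
    alternate_on_quality_hold: bool  # open non-conformance on the alternate
    expedite_cost: float
    wip_value_at_risk: float         # WIP exposed if the work order slips


def recommend(ctx: LateComponentContext) -> str:
    """Return 'wait', 'use_alternate', 'expedite', or 'escalate'."""
    # The slip fits inside the schedule buffer and the supplier usually delivers.
    if ctx.delay_days <= ctx.buffer_days and ctx.supplier_on_time_rate >= 0.9:
        return "wait"
    # Enough alternate stock exists and quality has no open hold on it.
    if ctx.alternate_stock_qty >= ctx.required_qty and not ctx.alternate_on_quality_hold:
        return "use_alternate"
    # Expediting is only worth it when it costs less than the WIP it protects.
    if ctx.expedite_cost < ctx.wip_value_at_risk:
        return "expedite"
    # Nothing cheap and safe is available, so hand the case to a human.
    return "escalate"


base = LateComponentContext(
    supplier_on_time_rate=0.95, delay_days=2, buffer_days=5,
    alternate_stock_qty=100, required_qty=80, alternate_on_quality_hold=False,
    expedite_cost=1200.0, wip_value_at_risk=9000.0,
)
longer_delay = replace(base, delay_days=6)
with_hold = replace(longer_delay, alternate_on_quality_hold=True)
costly = replace(with_hold, expedite_cost=20000.0)

results = [recommend(c) for c in (base, longer_delay, with_hold, costly)]
```

The point of the structure is that the agent assembles one typed bundle of evidence per workflow, so the recommendation can be explained field by field instead of reconstructed from raw records.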

The third layer is policy. This is where on-prem matters. Some actions should always require human approval. Some substitutions are allowed only for certain plants or customers. Some quality deviations can be routed to engineering automatically; others cannot. Some finance adjustments can be proposed but never posted without review. If those rules live only in a remote vendor product, the manufacturer is still renting judgment. The policy layer has to sit in a boundary the operator controls.
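A local policy layer can be as plain as a function the manufacturer owns and versions. The sketch below mirrors the examples in the paragraph above, with hypothetical action names, a made-up plant allow-list, and an assumed "minor" severity label; unknown actions default to human approval.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO = "auto"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    action: str            # hypothetical action name, e.g. "substitute_component"
    plant: str
    severity: str = "minor"


# Assumed allow-list: plants where component substitution may be considered at all.
SUBSTITUTION_PLANTS = {"PLANT-A"}


def evaluate(req: ActionRequest) -> Decision:
    """Map a requested action to what the agent is allowed to do with it."""
    # Finance adjustments can be proposed but never posted without review.
    if req.action == "post_finance_adjustment":
        return Decision.NEEDS_APPROVAL
    # Substitutions are only considered for specific plants, and still need sign-off.
    if req.action == "substitute_component":
        if req.plant in SUBSTITUTION_PLANTS:
            return Decision.NEEDS_APPROVAL
        return Decision.DENY
    # Minor quality deviations route to engineering automatically; others do not.
    if req.action == "route_quality_deviation":
        return Decision.AUTO if req.severity == "minor" else Decision.NEEDS_APPROVAL
    # Fail safe: anything the policy does not know about goes to a human.
    return Decision.NEEDS_APPROVAL


decisions = {
    "finance": evaluate(ActionRequest("post_finance_adjustment", "PLANT-A")),
    "sub_allowed": evaluate(ActionRequest("substitute_component", "PLANT-A")),
    "sub_blocked": evaluate(ActionRequest("substitute_component", "PLANT-B")),
    "minor_dev": evaluate(ActionRequest("route_quality_deviation", "PLANT-B")),
    "major_dev": evaluate(ActionRequest("route_quality_deviation", "PLANT-B", "major")),
}
```

Keeping the rules in code the operator controls means a policy change is a reviewed diff, not a support ticket to a vendor.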

The fourth layer is execution. This is where most industrial AI pitches still feel thin. Useful agents do not stop at summarizing. They route exceptions, open tickets, draft supplier follow-ups, request approvals, classify defects, prepare reconciliations, and write outcomes back to core systems with auditability. That is the architecture InfraHive is built for: customer-controlled AI data processing and workflow automation running on infrastructure the customer owns, with zero ambiguity about where data moves and how actions are governed.
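One way to make "write back with auditability" concrete is an append-only log in which each entry is hashed together with the previous entry's hash, so later tampering is detectable. This is a generic hash-chain sketch and not a description of how InfraHive or any other product implements auditing; the `execute` function, action names, and approver IDs are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash an entry together with the previous hash to form a chain."""
    body = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def append(self, entry: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        self.entries.append(
            {"entry": entry, "prev": prev_hash, "hash": _digest(prev_hash, entry)}
        )

    def verify(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev_hash = ""
        for row in self.entries:
            if row["prev"] != prev_hash or row["hash"] != _digest(prev_hash, row["entry"]):
                return False
            prev_hash = row["hash"]
        return True


def execute(action, target, approved_by, log, write_back) -> bool:
    """Run write_back only when a named approver exists; log the outcome either way."""
    if approved_by is None:
        log.append({"action": action, "target": target, "status": "blocked_no_approval"})
        return False
    write_back(target)
    log.append({"action": action, "target": target, "status": "done",
                "approved_by": approved_by})
    return True


log = AuditLog()
written = []  # stands in for the core system receiving the write-back
blocked = execute("release_hold", "WO-1001", None, log, written.append)
done = execute("release_hold", "WO-1001", "qa.lead", log, written.append)
chain_ok = log.verify()
```

In this sketch the blocked attempt is logged just like the successful one, which is usually what an auditor wants to see: what the agent tried, not only what it did.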

The fifth layer is measurement. If the system cannot connect its actions back to throughput, rework, expedite spend, service level, or finance impact, it is just faster confusion. This is why a measurement spine like MetricFlow matters. It ties automation back to economic outcomes rather than demo theatrics.
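A hedged sketch of what that measurement could look like at its simplest: summarize resolved exception cases into cycle time, override rate, and expedite spend, then compare a baseline period with an agent-assisted one. The `ExceptionCase` fields and the sample numbers are invented for illustration and are not results from any deployment; this is also not MetricFlow's interface.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class ExceptionCase:
    opened_hour: float     # hours since the start of the measurement window
    closed_hour: float
    human_override: bool   # a person rejected or changed the proposed action
    expedite_spend: float


def summarize(cases: list) -> dict:
    """Roll a list of resolved cases up into a few outcome metrics."""
    if not cases:
        raise ValueError("need at least one case to summarize")
    cycle_times = [c.closed_hour - c.opened_hour for c in cases]
    return {
        "median_cycle_hours": median(cycle_times),
        "override_rate": sum(c.human_override for c in cases) / len(cases),
        "expedite_spend": sum(c.expedite_spend for c in cases),
    }


# Invented sample data: manual handling versus agent-assisted handling.
baseline = [
    ExceptionCase(0.0, 10.0, False, 500.0),
    ExceptionCase(0.0, 14.0, False, 0.0),
    ExceptionCase(0.0, 12.0, False, 300.0),
]
assisted = [
    ExceptionCase(0.0, 3.0, False, 0.0),
    ExceptionCase(0.0, 5.0, True, 200.0),
    ExceptionCase(0.0, 4.0, False, 0.0),
    ExceptionCase(0.0, 4.0, False, 0.0),
]

before = summarize(baseline)
after = summarize(assisted)
cycle_time_saved = before["median_cycle_hours"] - after["median_cycle_hours"]
```

Override rate is worth tracking next to cycle time: a fast workflow that humans keep correcting is a policy problem, not a win.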

The better answer for an ERP-heavy manufacturing estate is not to pretend ERP disappears next quarter. The better answer is to keep the system of record where it still earns its place and insert a customer-controlled execution layer above the brittle handoff zone. That layer can run on infrastructure the manufacturer governs, coordinate across plant and enterprise systems, and replace one ugly workflow at a time.

Direct answer: An on-prem manufacturing agent stack combines governed connectors, workflow-specific context retrieval, a local policy layer, auditable system actions, and outcome measurement, all deployed inside infrastructure the manufacturer chooses.

How do you implement this without another endless transformation program?

By refusing to start with a slogan.

The wrong kickoff is, "We need agentic manufacturing." That is how teams buy a roadmap instead of fixing a workflow. The right kickoff is choosing one cross-functional path where delay, manual coordination, and policy drift already cost real money.

Good first targets include purchase-order and invoice mismatch handling, maintenance triage for repetitive machine faults, quality deviation classification, spare-parts exception routing, supplier-delay response, or Tier-1 support requests from plants that keep bouncing between operations and IT. These are the places where ERP records exist but operational judgment still lives in people.

Implementation usually has three layers. First, evidence mapping: what systems hold trustworthy state, what data is late, what approvals exist, and what the business actually treats as decisive. Second, policy design: what the system may do automatically, what it may recommend with approval, and what must remain human-controlled. Third, rollout: one workflow, one plant, one region, or one business unit first, with aggressive measurement of overrides, cycle time, exception rate, service impact, and cost.

The forward-deployed model, where builders work inside the plant alongside the people who run the workflow, matters because every enterprise stack lies. The architecture diagram says one thing; the workflow in production says another. The best implementations start with a painful workflow and a small team that can move from connector setup to policy design to live feedback quickly.

Typical objections are predictable. "Our ERP environment is too customized." Fine. That is an argument for an execution layer, not against one. "We cannot replace everything." Good. Do not. "Our vendor already offers AI." Also fine, but an in-suite AI feature rarely sees the full economics of actions that cross procurement, quality, maintenance, operations, support, and finance. "We need a business case." Then start where the existing process already burns hours, throughput, or customer trust.

The migration path is almost always hybrid. Keep ERP as the book of record if needed. Replace the workflow layer first. That means the manufacturer avoids a giant rip-and-replace project while still clawing back the judgment work that causes the most delay. It also means connectors, policies, and monitoring become reusable assets for the next workflow instead of one-off project debris.

Direct answer: Real implementation starts with one painful exception workflow, maps the ugly reality first, builds local connectors and policy controls, uses a forward-deployed builder to bridge teams, and expands through measured hybrid rollout.

What results should manufacturers actually expect?

The first result is not some dramatic robot-factory fantasy. It is cleaner operational throughput.

When judgment-heavy workflows move into manufacturer-owned agents, teams spend less time stitching context together manually. Exceptions are resolved faster because the system gathers the evidence, applies policy, and prepares the action path before a human even steps in. Finance operations stop chasing documents across email and ERP notes. Maintenance teams stop losing time to repetitive diagnosis and scheduling friction. Quality teams get better triage instead of generic summaries.

The second result is control. A manufacturer-owned workflow layer is easier to inspect, audit, and adapt than a stack of SaaS add-ons. The same connector patterns that help maintenance can support finance or support. The same policy boundary can protect plant data and write-back rules across multiple workflows. That is where the economics improve: reusable capability instead of repeated subscriptions to thin interface layers.

The third result is strategic independence. Once the workflow layer is customer-controlled, the business can change systems beneath it over time without restarting from zero.

The same pattern shows up in customer deployment outcomes and in security and deployment design. The first use case may be narrow, but the connectors, approval patterns, logging model, and control posture become reusable. One ugly workflow becomes a repeatable operating capability.

Direct answer: Expect faster exception resolution, fewer manual touches, better auditability, and a reusable automation layer that compounds across manufacturing, finance, and support workflows.

What does this mean for manufacturing leaders in Europe and the US?

It means AI advantage will increasingly belong to the operator who owns the boundary, not the operator who buys the flashiest demo.

European manufacturers will feel the sovereignty and governance pressure more explicitly as data-control and intervention-path questions rise. US manufacturers may frame the same issue through uptime, cost, resilience, and vendor-concentration risk. Either way, the strategic move is similar: keep the workflow intelligence near the systems that run the plant, and keep the policy layer inside a boundary you can defend technically and commercially.

The early movers will strip exception-heavy work out of legacy ERP flows and rebuild it as controlled agent systems that fit how factories run.

Direct answer: In both Europe and the US, manufacturers that own their AI workflow boundary will modernize faster and with less operational risk than those waiting for ERP vendors to solve the problem for them.

So what should a manufacturer do next?

Pick one workflow where people are still acting as the integration layer between ERP, plant systems, and reality. Rebuild that decision path first. Keep the system of record if you need it. Just stop pretending the judgment layer belongs inside an old transaction stack.

If you want a practical view of manufacturer-owned workflow AI on infrastructure you control, start at https://infrahive.ai, review deployment control and security, inspect connector coverage, and explore how this works for your stack. The strategic choice is not whether AI will enter manufacturing. It is whether your plant gets to own the part that matters.

Direct answer: Start with one exception workflow, own the control boundary, prove the economics, and expand from there.

Frequently Asked Questions

Why use on-prem AI agents in manufacturing instead of a generic cloud copilot?

Because manufacturing workflows often depend on plant data, local policies, and system actions that need tighter control, lower ambiguity, and clearer auditability than a generic hosted assistant can usually provide.

Does this mean replacing ERP entirely?

No. Most manufacturers keep ERP as the system of record and replace the judgment-heavy workflow layer around it first.

Which workflows are best to start with?

Exception-heavy processes such as maintenance triage, invoice and PO mismatch handling, supplier-delay response, quality deviation routing, and repetitive plant support requests are strong starting points.

What makes deployment succeed fastest?

A narrow first workflow, strong connectors, explicit policy rules, and a forward-deployed builder who can turn plant reality into system behavior.

What is the biggest mistake in industrial AI projects?

Treating the model as the product. In production, the real product is the connector boundary, the policy layer, and the governed action system wrapped around the model.